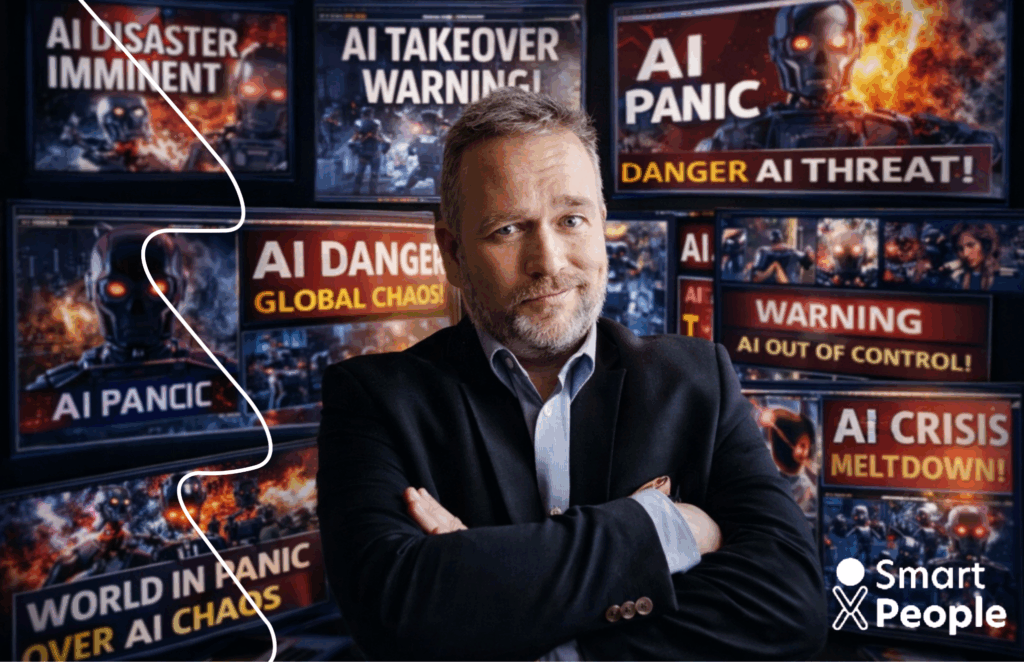

LinkedIn Is Lying to You About AI Taking Your Job

Open LinkedIn. Scroll for 30 seconds.

You'll almost certainly see it: another confident post explaining that AI is about to take your job. Different wording, same conclusion. Fear sells well. Certainty sells even better.

And for a moment, it works.

You feel a flicker of anxiety. Maybe irritation. Maybe defensiveness.

But here's the problem: organisations don't collapse because people are scared.

They collapse because when something breaks, nobody knows who owns it.

AI is changing work — profoundly. That part is true.

But the LinkedIn version of the story swings between panic and hype. Neither helps when you're responsible for delivery, cost, risk and continuity.

So let's step away from the feed and talk about what actually shows up where it matters: in board discussions, procurement negotiations and uncomfortable delivery reviews.

The Myth vs. The Reality

Every few days there's another headline predicting mass replacement. Roles disappearing. Professions becoming obsolete. The message is always the same: adapt immediately or fall behind.

It sounds dramatic. It feels urgent. It's also incomplete.

The question those headlines skip: replace these roles with what… and when something goes wrong, who is accountable?

AI is extremely good at certain things. It processes information faster than humans. It summarises, drafts, compares and accelerates execution. As a result, a lot of work that once justified headcount simply doesn't anymore.

That doesn't mean value disappears.

It means value moves.

And when value moves, risk moves with it — whether organisations acknowledge it or not.

What AI Actually Does Well

AI removes friction. Relentlessly. It takes work that used to consume weeks and compresses it into minutes. Reports get summarised. Drafts appear instantly. Patterns emerge before meetings even start.

This compresses execution layers that once required teams of junior or support roles. Not because those people were ineffective — but because coordination used to be expensive. AI makes it cheap.

At first, this feels like efficiency. Relief, even.

But then something else happens. Work that existed mainly because of process overhead starts to disappear. And with it, roles that were never clearly accountable for final outcomes.

From a leadership or procurement perspective, this moment is subtle — and dangerous.

Because in an AI-accelerated organisation, unclear ownership is no longer just inefficient. It is a measurable operational and contractual risk.

When fewer people touch a process, there is nowhere left to hide ambiguity. And when ambiguity surfaces, it surfaces fast.

What AI Can't Do (Yet. And Maybe Ever.)

What AI cannot do is carry the weight of a decision when the data is inconclusive. It cannot sit in the room when priorities collide and say, "This is the call, and I own the consequences." It cannot absorb the tension when delivery fails and explanations run out.

Those capabilities don't scale.

They concentrate.

This is why AI doesn't remove the need for senior capability — it strips away everything that isn't truly senior. Not titles. Not tenure. Ownership under pressure.

And that's uncomfortable. Because AI exposes a truth many organisations have quietly lived with for years: some roles labelled as "senior" were built to manage execution, not to own outcomes.

When execution is automated, that difference becomes impossible to ignore.

The Real Shift Nobody's Talking About

The most important shift isn't about jobs disappearing. It's about how responsibility is designed.

Execution is becoming abundant. Accountability is not.

As AI absorbs more operational work, organisations are pushed — often unwillingly — away from buying capacity and toward buying outcomes. Not because it's fashionable, but because the alternative becomes too risky.

When fewer people touch a process, every unclear decision, every blurred handover, every "it depends" carries more weight. This is why many headcount reductions aren't about individual performance. They're about structure.

AI accelerates the removal of work that was never truly owned — and exposes governance gaps that used to be hidden by headcount.

At scale, this is not a talent issue.

It's a design failure.

Why the Panic Is Mostly Clickbait

The panic narrative survives because fear travels fast. Nuance doesn't. "AI will take your job" is easier to share than "AI will force organisations to confront weak ownership models."

There's also a lived reality gap. Many of the loudest voices have never used AI in real delivery. Those who have are usually quieter — and more focused. They see AI as an accelerator, not a replacement. A way to remove noise so that real decisions finally get the attention they deserve.

Replacement removes people.

Augmentation removes friction.

Most organisations are dealing with the second — while still governing delivery as if nothing has changed.

What This Means for Your Career

Early-career professionals will feel this shift first. The old learning path built on junior execution is shrinking. That's unsettling — but it's also revealing. There's less room to hide, and faster exposure to real complexity.

Mid-career professionals are generally more resilient, as long as they don't treat AI as optional. Experience still matters, but only when paired with adaptability and a willingness to own outcomes.

For senior leaders, AI is neither a threat nor a shortcut. It removes noise. What remains is the work no one else can do: judgment, prioritisation and responsibility when things don't go to plan.

At this level, the real risk isn't obsolescence.

It's being held accountable for something that was never clearly owned by design.

So What Do You Actually Do About It?

The most productive response isn't consuming more opinions. It's engaging with reality. Use AI in real work. See where it helps — and where it quietly breaks assumptions.

At the same time, organisations need to invest deliberately in what automation cannot replace: clear process design, decision-making under uncertainty and explicit ownership models.

And perhaps most importantly, they need to rethink how delivery is bought and governed. When execution is automated, buying "people in roles" becomes a structural risk. What matters instead is competence tied to outcomes, and continuity that survives changes in people, vendors and tools.

The Bottom Line

AI is not coming to take jobs.

It's coming to remove the buffer that used to hide weak ownership.

Execution will continue to compress. Accountability will concentrate. Organisations that face this honestly will reduce risk, improve predictability and stay flexible under constant change.

Those that don't may still have people.

But when something goes wrong — and something always does — they'll discover too late that responsibility was never clearly assigned.

And that, far more than AI itself, is what should really worry us.

Ready to Build Teams That Own Outcomes?

At Smart People, we don't just provide talent. We provide accountability. Embedded teams that integrate with your operations, deliver on outcomes, and carry the responsibility when it matters most.